"Savage Love" Alexa Skill

Overview

This Amazon Alexa skill lets a user ask Alexa to play back the “Letter of the Day” from Savage Love, a popular dating and relationship advice column. These letters are typically short, so my thought was that users could listen to one in the spare moments between other activities, perhaps while getting breakfast ready before work or waiting for dinner to finish.

In all honesty, I wasn’t sure that the skill would be something anyone would actually want to use. So I developed a “minimum viable product,” a crucial component of the Lean Startup methodology, to test the app with actual users and learn as much as possible before spending too much time coding it.

I knew that Alexa sounded quite natural in short bursts—perhaps a few sentences at a time—but that the speech it produces isn’t perfect. Since this application would require Alexa to read back, on average, two to three minutes of synthesized speech per letter, would users become irritated? Would the colloquial language and acronyms often found in the letters and responses be read back incorrectly by the Alexa engine, confusing users to the point where they lose track of what’s being read to them? Rather than diving right in with development of the skill, I made a prototype that I could test on others and try to find answers to these questions.

Approach and Design

When creating Voice User Interfaces (VUI), it is arguably even more important than in graphical user interfaces (GUI) to provide feedback to the user, telling them what actions they can take at what time, and assuring them that they’re in the right place. In contrast to graphical interfaces, in which standards and conventions that most users are familiar with have arisen over time, VUIs are still novel and unfamiliar to many people, so extra guidance is needed.

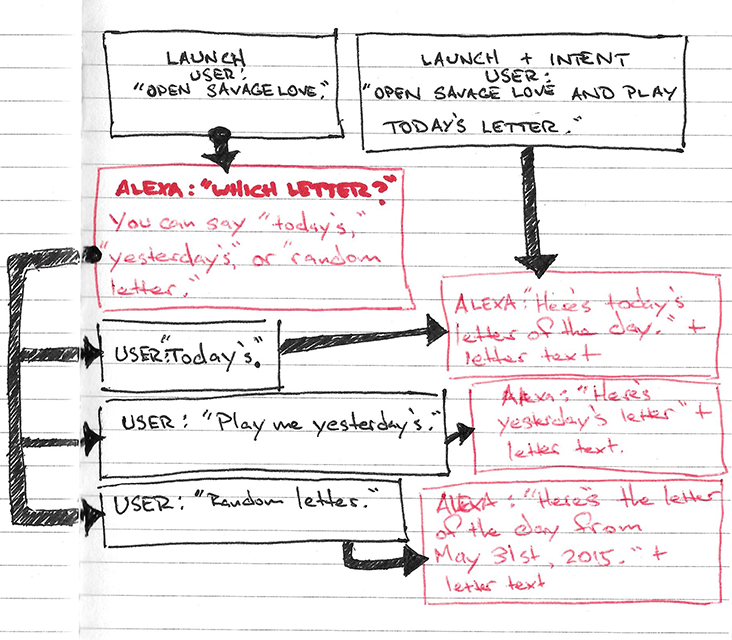

For example, a user might say “Open Savage Love,” but they might not specify their intent, because they aren’t sure what the possibilities are. In this case, they have to be informed of their options, but without being overwhelmed by them. No more than three possibilities should be presented to the user at a time, so if the user opens the skill without saying what it is they want to do, Alexa tells them they can “listen to today’s letter,” “listen to yesterday’s letter,” or “listen to a random letter.”

If the user is already familiar with the skill and does know what they want to do, they can open it and say their intent all at once. They might say, for example, “Open Savage Love and play the letter of the day for today.” The chart below shows the basic interaction a user can have with the skill:

Alexa skills require the following components:

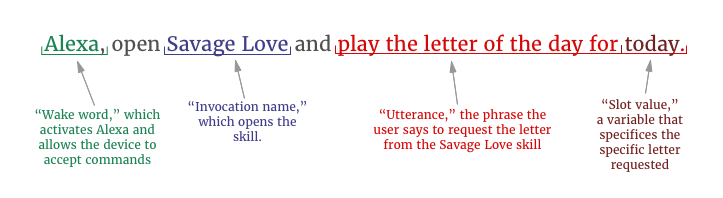

- An Invocation Name, which the user says to open and use your skill. In this case, the invocation name is “Savage Love.”

- Intents, or “actions that users can do with your skill,” specified in the skill's code. In this case, the intent is getletteroftheday, which, upon request, gets a letter and plays it back to the user.

- Utterances, which are phrases the user says to invoke these intents; for example, “play the letter of the day for today.” Utterances also contain slots, or possible values; in this case, the slot value is “today.” If the user had requested yesterday’s letter, the slot value would be “yesterday.”

- Code that accepts interpreted speech data from Alexa and performs the necessary actions. In this case, this is the code I wrote to get the requested letter and return it to Alexa to be read to the user.

So, the Alexa engine will listen to the user's request, and break it down as follows:
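As an illustration, the example request from earlier might break down roughly like this. This is a simplified sketch, not the actual JSON that Alexa sends to a skill; the field names and the slot name `day` are assumptions made for illustration.

```javascript
// Simplified, hypothetical view of how one spoken request is broken down.
// The field names and the slot name "day" are illustrative assumptions,
// not the exact structure of a real Alexa request.
const spokenRequest =
  "Alexa, open Savage Love and play the letter of the day for today";

const interpretedRequest = {
  wakeWord: "Alexa",              // wakes the device
  invocationName: "Savage Love",  // selects this skill
  intent: "getletteroftheday",    // the action to perform
  slots: { day: "today" },        // would be "yesterday" for yesterday's letter
};

console.log(spokenRequest);
console.log(interpretedRequest.intent, interpretedRequest.slots.day);
```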

Because there are so many different ways people can ask for the same thing, the developer needs to provide utterances to the Alexa service to ensure that Alexa correctly interprets what the user is asking for. I tried to think of as many ways as I could that a user might ask for the letter of the day. Of course, I wouldn't know for sure how people would make their requests until I tested, but I used these 70 utterances as a starting point.
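One way to build up a long list of phrasings without typing each one by hand is to expand a template of alternatives. The sketch below is only an illustration of that idea: the `{a|b}` notation and the `expandUtterances` function are made up for this example and are not the syntax used by Alexa or by the prototype. In a real interaction model the day would normally be a slot rather than a literal word.

```javascript
// Expands a template such as "{play|read me} the letter for {today|yesterday}"
// into every concrete phrase it describes. The "{a|b}" notation is a
// simplified, made-up syntax for this sketch.
function expandUtterances(template) {
  const match = template.match(/\{([^{}]*)\}/);
  if (!match) {
    // No groups left: collapse doubled spaces left behind by empty options.
    return [template.replace(/\s+/g, " ").trim()];
  }
  const [group, body] = match;
  const before = template.slice(0, match.index);
  const after = template.slice(match.index + group.length);
  // Substitute each option in turn, then expand the remaining groups.
  return body
    .split("|")
    .flatMap((option) => expandUtterances(before + option + after));
}

const samples = expandUtterances(
  "{play|read me|give me} the letter {of the day|} for {today|yesterday}"
);
console.log(samples.length); // 3 * 2 * 2 = 12 variants
console.log(samples[0]);     // "play the letter of the day for today"
```

Even a handful of templates like this can produce dozens of variants, though user testing is still the only way to find out which phrasings people actually use.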

Creating the Prototype

Creating prototypes for voice user interfaces (VUIs) poses more of a challenge than creating them for graphical user interfaces (GUIs). Whereas Sketch, Photoshop, InVision, Adobe XD, and others are all available to quickly create visual prototypes, similar VUI prototyping options are not yet available.

Therefore, some programming was necessary in order to conduct user tests, though I did try to simplify as much as possible. For instance, rather than writing code to actually find letters for today, yesterday, and a random day in the past, I simply hard-coded the skill to play back particular letters (so, for instance, if a user asked for a "random" letter to be read to them, the letter for January 14 would always play back, rather than a truly random letter). This dramatically cut down on development time, while still allowing me to sufficiently test the skill’s functionality and observe how users interact with it. The code is admittedly very rough, and it was a little difficult not to spend time refactoring and optimizing it, but my intent was to write something that was good enough to test as quickly as possible as an exercise in rapid prototyping. View the prototype code here.
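The hard-coding shortcut can be pictured as a simple lookup table. This is a hypothetical sketch rather than the prototype's actual code: only the mapping from "random" to the January 14 letter comes from the description above, and the other two values are placeholders.

```javascript
// Prototype shortcut: every request type maps to a fixed, pre-chosen letter.
// Only "random" -> "January 14" comes from the write-up; the other two
// values are placeholders invented for this sketch.
const HARD_CODED_LETTERS = {
  today: "PLACEHOLDER_LETTER_FOR_TODAY",
  yesterday: "PLACEHOLDER_LETTER_FOR_YESTERDAY",
  random: "January 14", // always the same "random" letter
};

// Returns the fixed letter for a request type, or null for anything
// unrecognized so the caller can reprompt the user.
function pickHardCodedLetter(requestType) {
  return Object.prototype.hasOwnProperty.call(HARD_CODED_LETTERS, requestType)
    ? HARD_CODED_LETTERS[requestType]
    : null;
}

console.log(pickHardCodedLetter("random")); // "January 14"
```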

I used the RSS feed for Savage Love to get the letters, and I wrote the prototype in Node.js with several Node packages, including alexa-app, feedparser, and striptags.

The skill functions as follows:

- The user requests a letter. For example, “Alexa, open Savage Love and play the letter for today.”

- Amazon/Alexa interprets the speech as outlined above, and sends the information to my service for processing.

- My service gets the RSS data from The Stranger’s RSS feed, finds the letter for the requested date in this feed, formats it (stripping out HTML tags and other extraneous information), and sends the information back to the Alexa server.

- Alexa reads the letter to the user.
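The third step above, finding the letter for a date and cleaning it up, might look something like the following. This is a self-contained sketch under stated assumptions, not the prototype's code: the item shape (`title`, `published`, `body`) and the sample data are invented, and `stripHtml` is a naive regex stand-in for what the striptags package would do.

```javascript
// Naive stand-in for the striptags package: good enough for a sketch,
// not for untrusted or malformed HTML.
function stripHtml(html) {
  return html.replace(/<[^>]*>/g, " ").replace(/\s+/g, " ").trim();
}

// Returns "YYYY-MM-DD" for a Date, in UTC so the example is deterministic.
function dayKey(date) {
  return date.toISOString().slice(0, 10);
}

// Finds the feed item published on the requested day and returns plain text
// for Alexa to read, or null when there is no letter for that day.
function letterForDay(items, requestedDate) {
  const wanted = dayKey(requestedDate);
  const item = items.find(
    (entry) => dayKey(new Date(entry.published)) === wanted
  );
  return item ? `${item.title}. ${stripHtml(item.body)}` : null;
}

// Invented sample data standing in for parsed RSS items.
const sampleItems = [
  {
    title: "Sample Letter",
    published: "2017-01-14T12:00:00Z",
    body: "<p>First paragraph.</p><p>Second paragraph.</p>",
  },
];

console.log(letterForDay(sampleItems, new Date("2017-01-14T08:00:00Z")));
// "Sample Letter. First paragraph. Second paragraph."
```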

User Testing

I presented users with the following simple scenario:

“You want to listen to a Savage Love Letter of the Day. Ask Alexa for it.”

I had to give users some basic guidance on how to interact with Alexa, since some of them were totally unfamiliar with it. I told them that to ask Alexa to do something, they have to use the wake word “Alexa,” and that the invocation name “Savage Love” had to be used to open and interact with the skill.

But I was especially careful not to give them specific words to use to access letters, since I wanted to try to collect as many natural utterances as possible. There are hundreds of slight variations in the way people can ask for the same thing, and unlike in GUIs where the visuals (ideally) provide affordances to the user, immediately showing them what the possibilities for interaction are, things are not so apparent in voice interfaces. So I provided minimal guidance to users during testing, so I could determine if the affordances programmed into the skill provided enough information to the user.

Results were as follows:

- The 70 utterances I started with didn't cover all the different ways people asked for letters. But Alexa was able to understand some of the requests anyway, and in cases where the request couldn't be recognized, the reprompt I included ("I didn’t hear that. Would you like to hear today’s letter, yesterday’s, or a random letter?") was sufficient to guide the user to make a request that could be understood by Alexa.

- There was an approximately 10-second delay between the user’s request for a letter and the response, possibly because the prototype’s code was unoptimized. After about 4 to 5 seconds, users expected a response and, hearing none, usually repeated their request. An initial acknowledgement is needed to let the user know their request has been received and is being processed (a VUI equivalent of a loading animation). The light around the top rim of the Alexa device does glow blue when processing, but visual feedback doesn’t help much on a voice-powered device, since users will most likely not be looking at it when interacting with it.

- Everyone used “partial intents” at first, meaning they said something to the effect of “Open Savage Love and play a letter” without specifying which letter. Partial intents need to be handled better. Currently, the response in this case is the generic error message, “I didn’t hear that. Would you like to hear today’s letter, yesterday’s, or a random letter?” The “I didn’t hear that” phrase needs to be removed in this case.

- Alexa’s voice unfortunately does become irritating to some users after a minute or so of uninterrupted speaking. The synthesized voice, while intelligible and quite convincing at times, does not always pause naturally, and is often thrown off by the slangy language found in the letters. Alexa’s sometimes strange inflection can make the letters a bit difficult to follow. In particular, there needs to be more of a separation between where the question ends and the response begins.

- The subject matter of the column deals with sexual issues, and some users found it a bit silly to hear a synthetic voice speaking about this kind of subject matter.

- In the column, advice-seekers sign their letters with a phrase that forms a relevant acronym (e.g., one letter was signed “Meditating on Moneymaking,” which forms the acronym "MOM," so the advice-seeker was addressed as “MOM” throughout the response). But for users unfamiliar with this convention, this was confusing and unclear—users weren't sure why Alexa kept saying "Mom."

- If there’s no letter of the day for today, how should this be handled? Is the most recent letter found? Alexa should say something like, “There’s no letter for today, but here’s the most recent letter.”
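Two of the findings above, the partial-intent wording and the missing letter for today, could be addressed by choosing the response more carefully. The following is a hypothetical sketch of that logic rather than code from the prototype; the `buildSpeech` function and its parameters are assumptions made for illustration.

```javascript
const OPTIONS_PROMPT =
  "Would you like to hear today's letter, yesterday's, or a random letter?";

// Chooses what Alexa should say. `letters` maps a day name to letter text,
// and `mostRecent` is the fallback text when today's letter is missing.
function buildSpeech({ understood, day, letters, mostRecent }) {
  if (!understood) {
    // The speech really was not recognized, so say so.
    return `I didn't hear that. ${OPTIONS_PROMPT}`;
  }
  if (!day) {
    // Partial intent: the request was heard but no letter was specified,
    // so skip the "I didn't hear that" preamble.
    return OPTIONS_PROMPT;
  }
  const letter = letters[day];
  if (!letter && day === "today" && mostRecent) {
    return `There's no letter for today, but here's the most recent letter. ${mostRecent}`;
  }
  return letter || `I couldn't find that letter. ${OPTIONS_PROMPT}`;
}

// "Open Savage Love and play a letter": heard, but no day given.
console.log(buildSpeech({ understood: true, day: null, letters: {} }));
```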

Next Steps and Final Thoughts

The consensus seemed to be that, with some improvements, regular readers of the column might find this skill useful. Only one of the test participants (out of four) had read Savage Love before, and she was a sporadic reader. After making improvements, I hoped to test it with regular readers.

Unfortunately, I hadn’t considered that I wouldn’t be allowed to publish this skill on Amazon for others to download and use, since I don’t have legal permission to distribute the column. Amazon is quite strict about ensuring that skills don’t make use of any unauthorized intellectual property. Luckily, since this was developed as a minimum viable product, I hadn’t spent too much time developing it. As it turns out, this product is probably not viable, but because of unforeseen legal reasons rather than because it isn't useful.

I plan to contact The Stranger, the publisher of Savage Love, to see about getting permission to publish the skill, but I’m not particularly optimistic that I’ll get it. However, developing and testing the skill was a very valuable learning experience, and the possibilities for voice-controlled applications are very exciting. In this specific case, requesting the letter of the day just by asking for it is far easier and more natural than looking it up on the website or newsreader on one’s computer or mobile device. It also allows the user to multitask, quickly accessing and consuming the letter while remaining free to do other things simultaneously.

Many industries, such as travel, see voice-based interaction as the "next frontier" in computing. The challenge, they say, will be dealing with the "unstructured queries" of voice-based computing. Indeed, even in this Alexa application, which was quite simple, users often phrased their requests in unexpected ways. Therefore, careful design of VUIs, based on extensive research and testing, will be crucial to allow users to accomplish their desired tasks while still maintaining the natural, conversational interactions that make voice-based computing so appealing.